The last glacial ocean: The challenge of comparing multiproxy data synthesis with climate simulations

Jonkers L, Rehfeld K, Kageyama M and Kucera M

Past Global Changes Magazine 29(2): 82-83, 2021

The Last Glacial Maximum (LGM) offers paleoscientists the possibility to assess climate model skill under boundary conditions fundamentally different from today. We briefly review the history and challenges of LGM data–model comparison and outline potential future directions.

The Last Glacial Maximum

The Last Glacial Maximum (LGM; 23,000–19,000 years ago) is the most recent time in Earth's history with a fundamentally different climate from today. Thus, from a climate modeling perspective, the LGM is an ideal test case because of its radically different and quantitatively well-constrained boundary conditions.

Reconstructions provide quantitative constraints on LGM climate, but they are often archived in isolation. Paleoclimate syntheses bring individual reconstructions together and offer a large-scale, even global, perspective on paleoclimate that is impossible to obtain from single observations. The first synthesis of the LGM surface temperature field, carried out within the Climate: Long range Investigation, Mapping, and Prediction (CLIMAP) project in the 1970s, served as boundary conditions for atmosphere-only models (CLIMAP Project Members 1976), which required full-field seasonal reconstructions. Later, with the advent of coupled ocean-atmosphere models, the information from paleoclimate archives could be used to benchmark simulations.

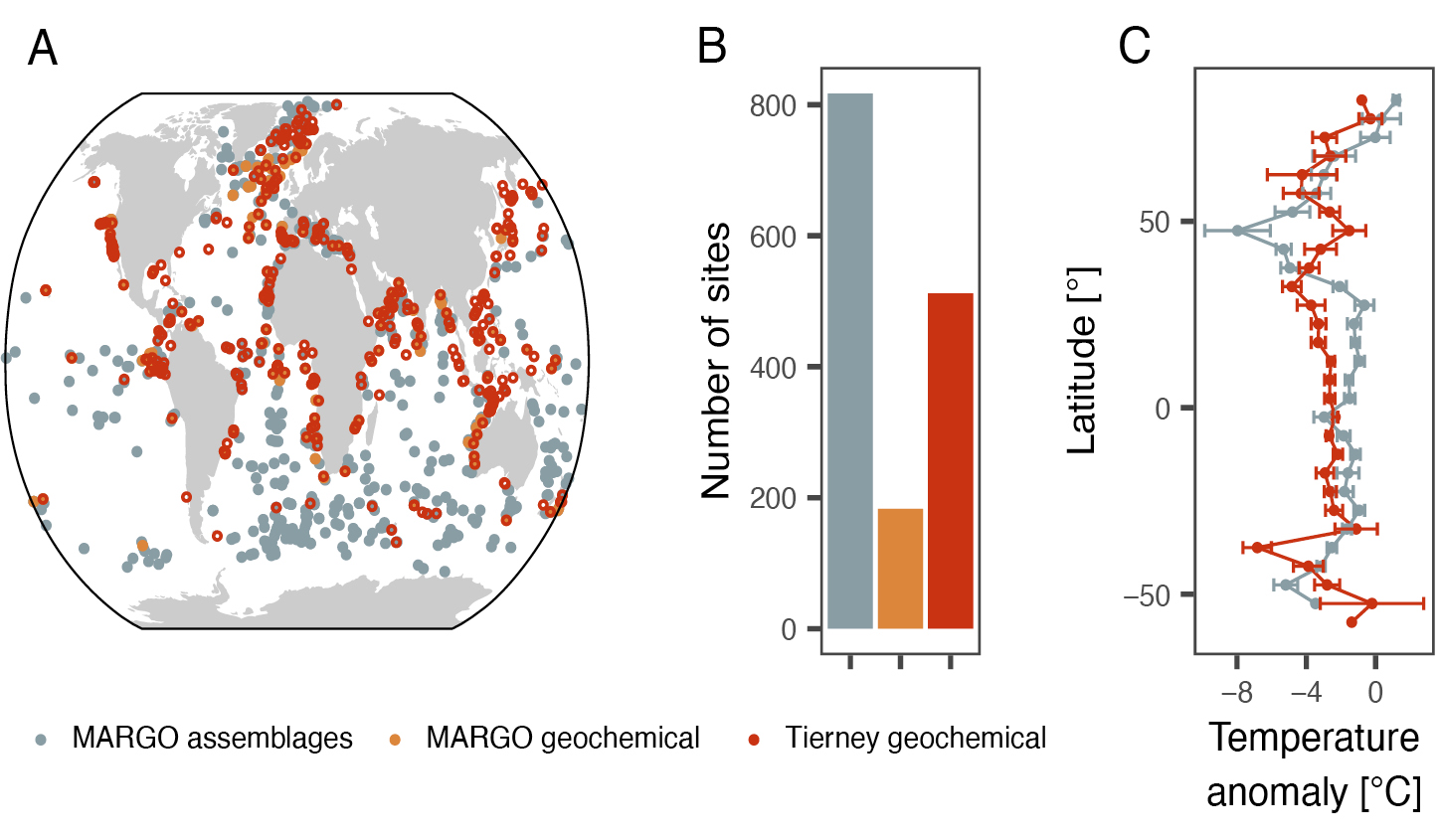

Since CLIMAP, the data coverage has increased tremendously and new (geochemical) proxies for seawater temperature have been developed and successfully applied. Thanks to synthesis efforts, the LGM is now arguably the time period with the most extensively constrained sea-surface temperature field prior to the instrumental period (MARGO project members 2009; Tierney et al. 2020).

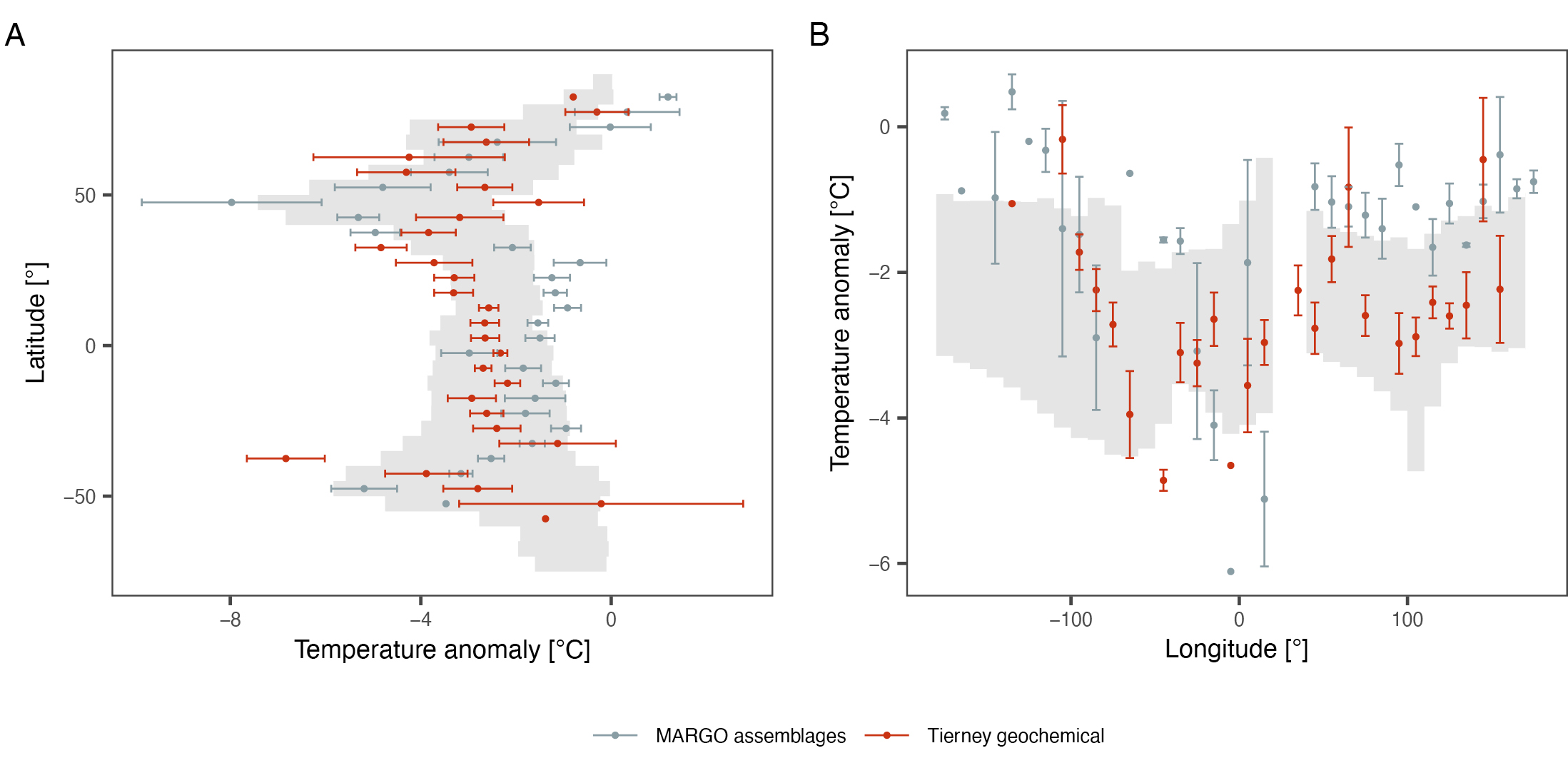

Climate models largely capture the reconstructed global average LGM cooling of the oceans (Kageyama et al. 2021; Otto-Bliesner et al. 2009), thus allowing us to constrain climate sensitivity (Sherwood et al. 2020). However, the average LGM cooling emerges from a signal of marked variability (MARGO project members 2009; Rehfeld et al. 2018), a reflection of climate dynamics that cannot be resolved from the global mean. The reconstructions indicate pronounced regional patterns of oceanic temperature change, including steep gradients in the cooling across the North Atlantic (MARGO project members 2009). It is in these spatial patterns of LGM temperature change that the largest differences are found among the individual proxies, among the models, and between the proxies and the models (Kageyama et al. 2021).

The causes of these differences (and of model–data mismatch in general), and hence their implications, lie in both the reconstructions and the models. It is important to resolve the underlying reasons for the differences in order to increase the relevance of paleodata–model comparison for future predictions.

Main challenges

A crucial first step to assess (any) mismatch between paleoclimate reconstructions and simulations is to quantify the uncertainty and bias of both. Without this, the reason for differences (or the meaning of agreement) will remain difficult to elucidate.
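As a rough illustration of why both uncertainties matter, the sketch below expresses the data–model mismatch at a single site in units of the combined uncertainty. It assumes independent, Gaussian errors and uses the spread of a small set of simulations as a crude stand-in for model uncertainty. The function name and all numbers are hypothetical and are not taken from any of the cited syntheses.

```python
import math


def normalized_mismatch(proxy_anomaly, proxy_sigma, simulated_anomalies):
    """Return (mismatch, z_score) for one site.

    proxy_anomaly: reconstructed LGM-minus-present temperature (deg C)
    proxy_sigma: 1-sigma uncertainty of the reconstruction (deg C)
    simulated_anomalies: simulated anomalies at the site, one per simulation
    """
    n = len(simulated_anomalies)
    if n < 2:
        raise ValueError("need at least two simulations to estimate spread")

    model_mean = sum(simulated_anomalies) / n
    # Sample variance across simulations (assumption: this approximates
    # model uncertainty; structural errors shared by all models are missed).
    model_var = sum((x - model_mean) ** 2 for x in simulated_anomalies) / (n - 1)

    # Assumption: proxy and model errors are independent, so variances add.
    combined_sigma = math.sqrt(proxy_sigma ** 2 + model_var)
    if combined_sigma == 0.0:
        raise ValueError("combined uncertainty is zero; z-score undefined")

    mismatch = model_mean - proxy_anomaly
    return mismatch, mismatch / combined_sigma


# Hypothetical site: the proxy suggests 4 deg C of cooling, the models about 2.
mismatch, z = normalized_mismatch(-4.0, 1.5, [-2.0, -3.0, -1.0])
print(f"mismatch = {mismatch:.2f} deg C, z = {z:.2f}")
```

A mismatch of a given size reads very differently depending on the denominator: the same 2 °C offset is unremarkable when the combined uncertainty is large and significant when it is small, which is why neither agreement nor disagreement can be interpreted without it.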

Paleoclimate records preserve an imprint of past climate that is affected by uncertainty in the chronology of the archives and in the attribution of the signal, together with additional noise that may be unrelated to climate. Previous work suggests that, at least for the LGM, dating uncertainties and internal variability are not the largest sources of error for the reconstructions (Kucera et al. 2005). This is likely because sediment records average sufficiently across the four millennia that span the LGM, and radiocarbon dating is reliable enough to identify the target time slice. Instead, the attribution of the reconstructed temperatures to specific water depths or seasons, as well as the influence of factors other than temperature on the proxy signals, remain problematic and likely explain part of the difference among proxies (Fig. 1c).

Climate model simulations, on the other hand, are physically plausible realizations of climate dynamics that are simplifications of reality, a fundamental aspect that should not be forgotten during data–model comparison. Models are generally calibrated to instrumental data so that LGM simulations are independent tests of their ability to represent a climate different from the present. Model design choices lead to differences among the simulations of LGM temperature that are on a par with differences among proxies (Fig. 2). Among these design choices, the coarse spatial resolution of climate models leads to difficulties in accurately resolving small-scale features, such as eastern boundary currents or upwelling systems: areas where the data–model mismatch tends to be large (Fig. 2). Moreover, modelers have to make choices in terms of boundary conditions (in particular ice sheets) and in the set-up of the model used (e.g. including dynamic vegetation, interactive ice sheets). And finally, most simulations of LGM climate are performed as equilibrium experiments (without history/memory), whereas in reality the LGM was the culmination of a highly dynamic glacial period.

Ways forward

Proxy attribution can be addressed directly through increased understanding of the proxy sensor. Most seawater temperature proxies are based on biological sensors, and better understanding of their ecology is likely to help constrain the origin of the proxy signal (Jonkers and Kucera 2017). Alternatively, uncertainty in the attribution may also be accounted for in the calibration (e.g. Tierney and Tingley 2018). However, neither approach explicitly considers the dependence of the proxy sensor itself on climate. Forward modeling of the proxy signal is a promising way to address this issue, but sensor models for seawater temperature proxies are still in their infancy (Kretschmer et al. 2018).

Apart from the proxy attribution uncertainties, reconstructions are unevenly distributed in space. For both historical and geological reasons, most of the reconstructions stem from the North Atlantic Ocean and from continental margins (Fig. 1), and despite almost half a century of focus on reconstructing the LGM temperature field, progress in filling the gaps has been slow. This is in part due to the depositional regime that characterizes large parts of the open ocean, where sedimentation rates and/or preservation are often insufficient to resolve the LGM. Rather than aiming for a reconstruction of global mean temperature, a more fruitful approach may therefore be to focus on areas where the reconstructions can better constrain the simulations, for instance areas where models show the largest spread or bias.
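One simple way to identify such target areas is to rank regions by the spread among simulations. The sketch below does this with the sample standard deviation. The region names and values are invented for illustration, and a real analysis would work on gridded model output and also consider bias relative to existing reconstructions.

```python
from statistics import stdev


def rank_regions_by_spread(simulated):
    """Rank regions by inter-model spread, largest first.

    simulated: dict mapping a region name to a list of simulated LGM
    temperature anomalies (deg C), one value per model (at least two).
    Returns a list of (region, spread) tuples.
    """
    spreads = {region: stdev(values) for region, values in simulated.items()}
    return sorted(spreads.items(), key=lambda item: item[1], reverse=True)


# Hypothetical anomalies from three models in three made-up regions.
example = {
    "region_A": [-1.0, -2.0, -3.0],
    "region_B": [-2.0, -2.1, -1.9],
    "region_C": [0.0, -4.0, -8.0],
}

for region, spread in rank_regions_by_spread(example):
    print(f"{region}: spread = {spread:.2f} deg C")
```

Regions at the top of such a ranking are where an additional reconstruction would discriminate most strongly between simulations, provided that the sediments there actually resolve the LGM.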

At the same time, uncertainty in model simulations, including structural uncertainty, has to be considered more explicitly. It is increasingly common to run large ensembles of model simulations, thereby sampling parametric uncertainties and/or uncertainties in scenarios, or in initial or boundary conditions. Such an approach, together with the multi-model approach that the Paleoclimate Modelling Intercomparison Project (PMIP) has fostered, helps to better describe the uncertainty of the model simulations and to better quantify model–data (dis)agreement. Taking uncertainty in the models and in the paleodata into account, simulations and reconstructions can be integrated through data assimilation (Kurahashi-Nakamura et al. 2017; Tierney et al. 2020). Offline approaches to obtain full-field reconstructions are valuable but difficult to validate. Furthermore, such methods require some overlap between reconstructions and simulations to obtain reconstructions that are not only physically plausible but also realistic. Online data assimilation is possibly the most direct way of using the strengths of the models and the data to learn about the climate system.
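To make the idea of weighing simulations against reconstructions concrete, the sketch below applies a textbook scalar Kalman update at a single site: an ensemble of simulations provides the prior, and one reconstruction with a known error variance provides the observation. This is a deliberately minimal illustration under Gaussian assumptions, not the scheme used by Kurahashi-Nakamura et al. (2017) or Tierney et al. (2020). The function name and all values are hypothetical.

```python
def kalman_update(prior_ensemble, observation, obs_var):
    """Combine an ensemble prior with one observation (scalar case).

    prior_ensemble: simulated anomalies at the site (deg C), at least two
    observation: reconstructed anomaly at the same site (deg C)
    obs_var: error variance of the reconstruction (deg C squared)
    Returns (posterior_mean, posterior_var, gain).
    """
    n = len(prior_ensemble)
    if n < 2:
        raise ValueError("need at least two ensemble members")

    prior_mean = sum(prior_ensemble) / n
    prior_var = sum((x - prior_mean) ** 2 for x in prior_ensemble) / (n - 1)
    if prior_var + obs_var == 0.0:
        raise ValueError("prior and observation variances are both zero")

    # The gain is the weight given to the observation: close to 1 when the
    # ensemble is uncertain, close to 0 when the reconstruction is uncertain.
    gain = prior_var / (prior_var + obs_var)
    posterior_mean = prior_mean + gain * (observation - prior_mean)
    posterior_var = (1.0 - gain) * prior_var
    return posterior_mean, posterior_var, gain


# Hypothetical numbers: models cool by about 2 deg C, the proxy suggests 4.
mean, var, gain = kalman_update([-1.0, -2.0, -3.0], -4.0, 1.0)
print(f"posterior mean = {mean:.1f} deg C, variance = {var:.2f}, gain = {gain:.2f}")
```

The update also shows why overlap between reconstructions and simulations matters: if the observation lies far outside the ensemble range relative to both variances, the posterior is pulled toward a state that none of the simulations supports.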

Outlook

One avenue to increase the value of paleoclimate data for informing climate models is to better exploit the multidimensionality of the paleorecord. Archives of marine climate often hold more information than just temperature. Because many archives co-register different climate-sensitive parameters, (age) uncertainty can be reduced to some extent. Thus, approaches that carry out comparison, or data assimilation, in multiple dimensions (Kurahashi-Nakamura et al. 2017) are likely to provide more constraints on the reasons for model–data discrepancies.

Although the LGM time slice has proved a useful and effective way to compare models and data, the paleoclimate record is in fact four-dimensional, as it traces changes through time and space. Climate models can now increasingly simulate transient change over long periods of time. The future of climate model–data integration therefore likely belongs to timeseries comparisons (Ivanovic et al. 2016). Timeseries can be used to assess the temporal aspect of climate variability and the large-scale evolution of climate. With the increasing availability of multi-proxy and multi-parameter data syntheses (Jonkers et al. 2020), the prospect of four-dimensional data–model comparison is coming closer to reality.

Affiliations

1 MARUM Center for Marine Environmental Sciences, University of Bremen, Germany

2 Geo- und Umweltforschungszentrum, Tübingen University

3 Institute of Environmental Physics, Heidelberg University, Germany

4 Laboratoire des Sciences du Climat et de l'Environnement, Institut Pierre-Simon Laplace, UMR CEA-CNRS-UVSQ, Université Paris-Saclay, Gif-sur-Yvette, France

Contact

Lukas Jonkers: ljonkers@marum.de

References

CLIMAP Project Members (1976) Science 191: 1131-1137

Ivanovic RF et al. (2016) Geosci Model Dev 9: 2563-2587

Jonkers L, Kucera M (2017) Clim Past 13: 573-586

Jonkers L et al. (2020) Earth Syst Sci Data 12: 1053-1081

Kageyama M et al. (2021) Clim Past 17: 1065-1089

Kretschmer K et al. (2018) Biogeosciences 15: 4405-4429

Kucera M et al. (2005) Quat Sci Rev 24: 951-998

Kurahashi-Nakamura T et al. (2017) Paleoceanography 32: 326-350

MARGO project members (2009) Nat Geosci 2: 127-132

Otto-Bliesner BL et al. (2009) Clim Dyn 32: 799-815

Rehfeld K et al. (2018) Nature 554: 356-359

Sherwood SC et al. (2020) Rev Geophys 58: e2019RG000678

Tierney JE, Tingley MP (2018) Paleoceanogr Paleoclimatol 33: 281-301